Beyond Automation.

Into Autonomous Execution.

NerdAgent is not another AI tool.

It is a full-stack Agentic AI Operating System (AI OS) designed to plan, decide, and execute, not just respond.

What you get

- Autonomous agents that reason + act

- Multi-agent systems that collaborate

- Real-time execution across your existing stack

- Production deployment in minutes, not months

From AI Outputs

The Execution Gap

The Engineering Bottleneck

Fragile Automation

The No-Code Trap

What NerdAgent Actually Is

NerdAgent provides a unified runtime environment where:

- Agents are first-class compute units

- Workflows are dynamic graphs (not static flows)

- Memory is persistent + queryable

- Execution is event-driven + API-connected
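To make "dynamic graphs (not static flows)" concrete, here is a minimal sketch in which each node decides at run time which nodes run next. Every name in it (`run_graph`, `NODES`, the node functions) is hypothetical and chosen for illustration; it is not NerdAgent's API.

```python
from collections import deque
from typing import Callable, Dict, List

# Hypothetical names throughout; this is an illustration, not NerdAgent's API.
State = Dict[str, object]
Node = Callable[[State], List[str]]  # a node returns the names of the nodes to run next


def run_graph(nodes: Dict[str, Node], start: str, state: State, max_steps: int = 50) -> List[str]:
    """Run nodes starting at `start`, following edges chosen at run time."""
    queue = deque([start])
    trace: List[str] = []
    while queue and len(trace) < max_steps:  # max_steps guards against cycles
        name = queue.popleft()
        trace.append(name)
        queue.extend(nodes[name](state))
    return trace


def classify(state: State) -> List[str]:
    # The next node depends on the data, which is what makes the graph dynamic.
    return ["refund"] if "refund" in str(state["message"]).lower() else ["answer"]


def refund(state: State) -> List[str]:
    state["action"] = "refund_ticket_opened"
    return ["answer"]


def answer(state: State) -> List[str]:
    state["reply"] = "done"
    return []  # no successors: the run ends here


NODES: Dict[str, Node] = {"classify": classify, "refund": refund, "answer": answer}
```

Calling `run_graph(NODES, "classify", {"message": "I want a refund"})` visits `classify`, `refund`, then `answer`, while a message without the word "refund" skips the `refund` node entirely.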

How NerdAgent Works

(AI OS Architecture)

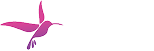

The 8-Layer AI OS Stack

Layer 1

Infrastructure Layer

Integrate AI into existing systems with ease, when needed.

The compute foundation

APIs (REST, GraphQL)

GPU / TPU / Cloud

Data Lakes / Warehouses

Orchestration Engines (Airflow, Prefect)

Storage (S3, GCS)

Monitoring (Prometheus, Grafana)

What this means

NerdAgent sits above the infrastructure and is not tied to any provider → cloud, on-prem, or hybrid.

Layer 2

Agent Internet Layer

The runtime for autonomous agents.

Multi-agent systems

Agent mesh networks

Execution environments

Embedding stores (Pinecone, Weaviate)

Agent Actions APIs

Key Idea:

Agents are not isolated → they exist in a networked execution fabric.

Layer 3

Protocol Layer

Standardized communication.

A2A (Agent-to-Agent)

MCP (Model Context Protocol)

ACP, ANP, AGP, TAP, OAP

Why it matters:

This enables interoperability + composability of agents.

Layer 4

Tooling Layer

RAG (Retrieval-Augmented Generation)

Vector DBs (Chroma, FAISS)

Function calling (OpenAI tools, LangChain)

Code execution sandbox

Browsing modules

Plugin integrations

Key Insight:

This is where AI becomes actionable, not just generative.
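A rough illustration of the function-calling idea: the model emits a JSON description of a call, and the runtime looks up and runs the matching function. The registry, the `tool` decorator, the `dispatch` helper, and the `get_order_status` tool below are all assumptions made for this sketch. The JSON shape is simplified and does not follow any specific vendor format.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical tool registry; not NerdAgent's or any vendor's actual API.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function under its own name so it can be called by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> str:
    # Stand-in for a real API call.
    return f"Order {order_id} has shipped"


def dispatch(call_json: str) -> Any:
    """Run the tool named in a JSON object of the form {"name": ..., "arguments": {...}}."""
    call = json.loads(call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        raise KeyError(f"unknown tool: {call['name']}")
    return fn(**call["arguments"])
```

For example, `dispatch('{"name": "get_order_status", "arguments": {"order_id": "A17"}}')` runs the registered function with `order_id="A17"`. An unregistered tool name raises `KeyError` instead of silently doing nothing.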

Layer 5

Cognition Layer

Planning (PL)

Decision Making (DM)

Reasoning Engine

Goal Management

Self-improvement loops

Error handling

Guardrails / ethics engine

This is critical:

This layer converts:

input → structured reasoning → executable plans
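One way to picture an "executable plan" is as a set of named steps with dependencies that can be ordered before execution. The sketch below assumes that shape. `Step`, `Plan`, and the example plan are hypothetical, and the plan is written by hand here, whereas a real system would presumably have a model produce it.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import List


@dataclass
class Step:
    name: str
    tool: str  # hypothetical tool label, for illustration only
    depends_on: List[str] = field(default_factory=list)


@dataclass
class Plan:
    goal: str
    steps: List[Step]

    def execution_order(self) -> List[str]:
        """Return step names so that every step comes after its dependencies."""
        graph = {s.name: set(s.depends_on) for s in self.steps}
        return list(TopologicalSorter(graph).static_order())


# A hand-written plan standing in for planner output.
example_plan = Plan(
    goal="email a summary of the latest report",
    steps=[
        Step("email", tool="smtp", depends_on=["summarize"]),
        Step("fetch", tool="http"),
        Step("summarize", tool="llm", depends_on=["fetch"]),
    ],
)
```

Although the steps are listed out of order, `example_plan.execution_order()` puts `fetch` before `summarize` and `summarize` before `email`.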

Layer 6

Memory Layer

Working memory (session context)

Long-term memory

Identity module

Preference engine

Behavior modeling

Goal history tracking

Tool usage history

Why this matters:

Agents are not stateless → they evolve into context-aware systems
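The split between working memory and long-term memory can be sketched as a bounded session buffer plus a persistent, queryable store. The `AgentMemory` class and its method names are hypothetical. It uses SQLite keyword lookup purely to stay self-contained, where a production system would more likely use an embedding store.

```python
import sqlite3
from collections import deque
from typing import Deque, List


class AgentMemory:
    """Illustrative only: a session window plus a SQLite-backed fact store."""

    def __init__(self, db_path: str = ":memory:", window: int = 3) -> None:
        # Working memory: only the most recent `window` turns are kept.
        self.working: Deque[str] = deque(maxlen=window)
        # Long-term memory: pass a file path instead of ":memory:" to persist.
        self.db = sqlite3.connect(db_path)
        self.db.execute("CREATE TABLE IF NOT EXISTS facts (agent TEXT, fact TEXT)")

    def observe(self, turn: str) -> None:
        self.working.append(turn)

    def remember(self, agent: str, fact: str) -> None:
        with self.db:  # commits on success
            self.db.execute("INSERT INTO facts VALUES (?, ?)", (agent, fact))

    def recall(self, agent: str, keyword: str) -> List[str]:
        rows = self.db.execute(
            "SELECT fact FROM facts WHERE agent = ? AND fact LIKE ? ORDER BY rowid",
            (agent, f"%{keyword}%"),
        )
        return [row[0] for row in rows]
```

With `window=2`, observing three turns keeps only the last two in `working`, while anything stored through `remember` stays available to `recall` for that agent.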

Layer 7

Application Layer

Support agents

Research agents

Document agents

Scheduling bots

E-commerce agents

Security watchdogs

Layer 8

Governance Layer

Enterprise control plane.

Policy engine

Data privacy enforcement

Observability

Logging & auditing

Resource quotas

Trust frameworks

Bottom line:

This makes NerdAgent enterprise-ready by design
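As a small illustration of how a policy engine, resource quotas, and audit logging might fit together, the sketch below checks each requested action against an allow-list and a per-agent quota, and records every decision. The `Governor` class, its fields, and the tool names are assumptions made for the example, not a description of NerdAgent's control plane.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Governor:
    """Hypothetical control-plane check: allow-list, quota, and audit trail."""

    allowed_tools: Dict[str, Set[str]]  # agent name -> tools it may call
    quotas: Dict[str, int]              # agent name -> max allowed calls
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)
    used: Dict[str, int] = field(default_factory=dict)

    def authorize(self, agent: str, tool: str) -> bool:
        if tool not in self.allowed_tools.get(agent, set()):
            decision = "denied:policy"
        elif self.used.get(agent, 0) >= self.quotas.get(agent, 0):
            decision = "denied:quota"
        else:
            self.used[agent] = self.used.get(agent, 0) + 1
            decision = "allowed"
        # Every decision is logged, including denials.
        self.audit_log.append((agent, tool, decision))
        return decision == "allowed"
```

An agent with a quota of one call is allowed once and then denied on quota, and a tool outside its allow-list is denied on policy. All three decisions end up in `audit_log`.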

Execution Flow

Goal Definition

Input converted into structured objective

Cognition Layer

Planning + reasoning generate execution steps

Orchestration Layer

Tasks distributed across agents

Memory Layer

Context retrieved (history, preferences, knowledge)

Tooling Layer

Tools invoked to carry out each step (retrieval, function calls, code execution)

Agent Collaboration

Agents communicate via A2A protocols

Governance Layer

Policies enforced + actions logged

Response + Action

Output + real-world execution
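The flow above can be read as a pipeline in which each stage enriches a shared context and hands it to the next. The sketch below mirrors the eight stage names with stub functions. All function names, context keys, and stub values are hypothetical, and real stages would call models, tools, and agents instead of returning fixed data.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]
Stage = Callable[[Context], Context]


def goal_definition(ctx: Context) -> Context:
    # Turn raw input into a normalized objective string.
    return {**ctx, "objective": ctx["input"].strip().lower()}


def cognition(ctx: Context) -> Context:
    # Stub planner: a real one would derive steps from the objective.
    return {**ctx, "steps": ["lookup_order", "draft_reply"]}


def orchestration(ctx: Context) -> Context:
    return {**ctx, "assignments": {s: f"agent_{i}" for i, s in enumerate(ctx["steps"])}}


def memory(ctx: Context) -> Context:
    return {**ctx, "history": ["customer prefers email"]}


def tooling(ctx: Context) -> Context:
    return {**ctx, "results": {s: "ok" for s in ctx["steps"]}}


def collaboration(ctx: Context) -> Context:
    # Each agent hands off to the next one in assignment order.
    agents = list(ctx["assignments"].values())
    return {**ctx, "messages": list(zip(agents, agents[1:]))}


def governance(ctx: Context) -> Context:
    return {**ctx, "audit": [f"{a} ran {s}" for s, a in ctx["assignments"].items()]}


def response(ctx: Context) -> Context:
    return {**ctx, "output": f"Completed {len(ctx['results'])} steps"}


STAGES: List[Stage] = [
    goal_definition, cognition, orchestration, memory,
    tooling, collaboration, governance, response,
]


def run_pipeline(user_input: str) -> Context:
    ctx: Context = {"input": user_input}
    for stage in STAGES:
        ctx = stage(ctx)
    return ctx
```

Keeping each stage as a plain function from context to context makes it easy to reorder, replace, or test a single stage in isolation.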

Core Capabilities

(Powered by AI OS)

Document AI

OCR + LLM extraction + summarization

Memory & Context

Short-term + long-term vector memory

Multi-Model Orchestration

GPT-4 / Claude / Gemini switching

Workflow Automation

Trigger actions across 1000+ tools

One-Click Deployment

GitHub → AWS/Azure/GCP

Security & Guardrails

PII masking + policy enforcement
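For a sense of what PII masking can look like at its simplest, the sketch below replaces email addresses and phone-like digit runs with placeholders before text reaches a model or a log. The patterns and the `mask_pii` helper are illustrative assumptions. They are deliberately rough, and real PII detection needs much broader coverage than two regular expressions.

```python
import re

# Rough illustrative patterns; not exhaustive and not NerdAgent's implementation.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text: str) -> str:
    """Replace emails and phone-like numbers with fixed placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```

Masking emails first keeps digits inside an address from being picked up by the phone pattern.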

For Developers & Power Users

Full Control When You Need It

API-first architecture

Inject custom Python / JS logic

Real-time low-latency execution

Extend via plugins, tools, functions

Key Positioning

Start no-code → scale to full engineering control

Real-World Use Cases

Contact Centers → Automated support agents

Healthcare → Patient assistants + analysis

Finance → Risk & fraud automation

Telecom → Network intelligence agents

Dev Teams → AI feature deployment

Frequently Asked Questions

How is NerdAgent different from a chatbot or single AI model?

Traditional chatbots respond only to direct prompts using a single model. In contrast, NerdAgent is an AI Operating System for agents. It runs multiple agents and models in parallel, each with its own goal, and orchestrates them to solve complex tasks end-to-end. It also maintains state and memory across interactions. In short, NerdAgent builds autonomous workflows, not just single-shot answers.

What kind of memory does NerdAgent use?

Can I train or fine-tune the models within NerdAgent?

What integration options are available?

How do you ensure security and compliance?

Ready to transform your AI initiatives into agentic workflows?

Get started with NerdAgent today

Why Teams Choose NerdAgent

Reduce operational overhead

Faster decision cycles

Lower implementation cost

Scale AI without infra complexity

Improve CX with autonomous systems

- 243 Willis Ave, Mineola, NY, 11501-2432

- +1 (781) 953-0902

© 2026 NerdAgent.ai